See it in action.

A private RAG chatbot that indexes every document — handbooks, SOPs, runbooks — and answers employee questions with source citations, confidence scores, and multi-turn memory, streamed live into the browser.

Every company has the same hidden problem: critical knowledge locked in Drive folders, Slack threads, and the heads of three senior employees.

The answer is in a Google Doc from 2023. Nobody knows which folder, which version, which section.

New hires ask the same things. Managers re-answer weekly. No self-serve path to the answer.

One senior employee leaves and a decade of process knowledge walks out the door.

Conflicting answers from different docs. No confidence signal. No citations. Just "I think it's…"

A private RAG chatbot that parses, chunks, embeds, and indexes your documents — then answers with the exact page, section, and confidence score.

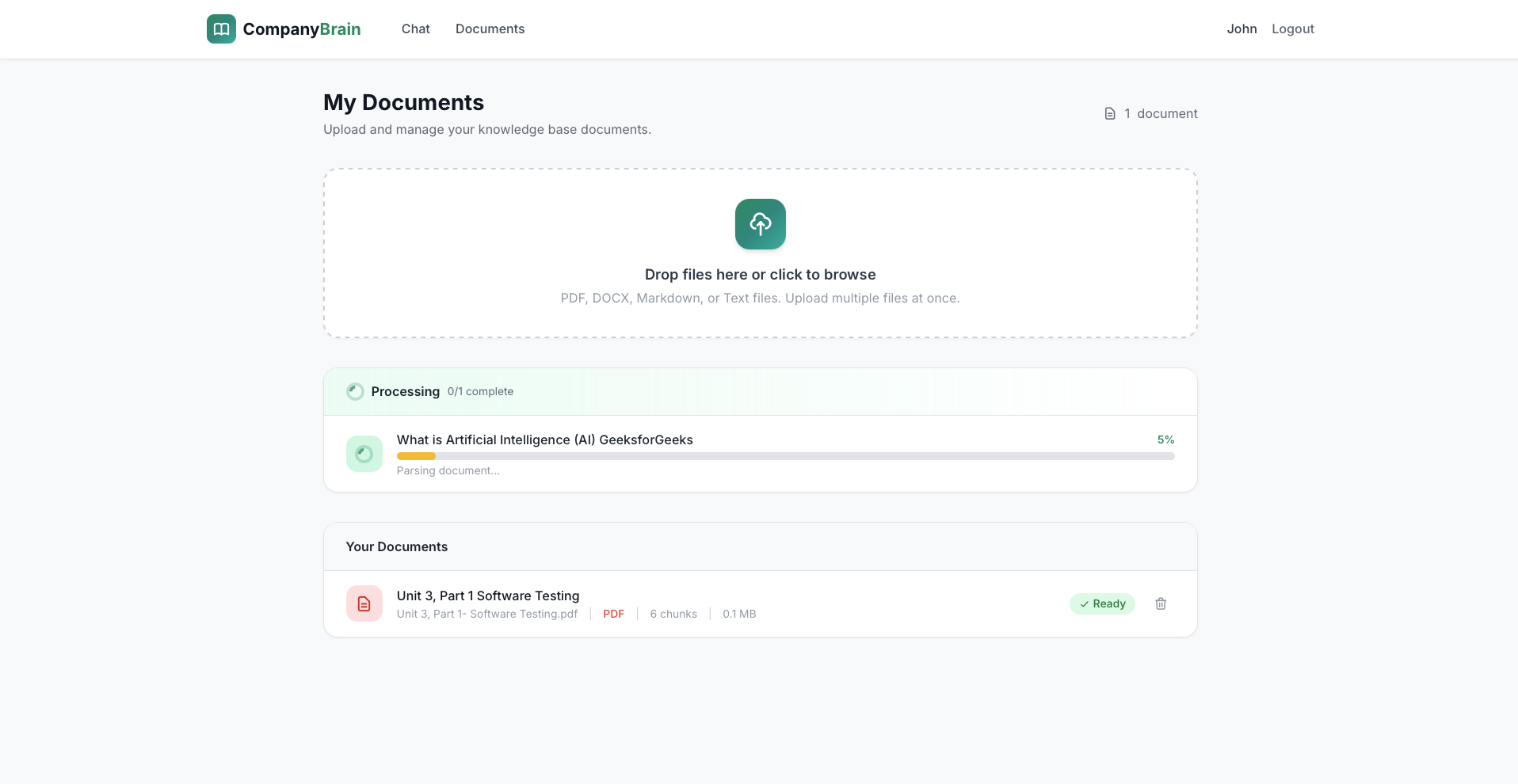

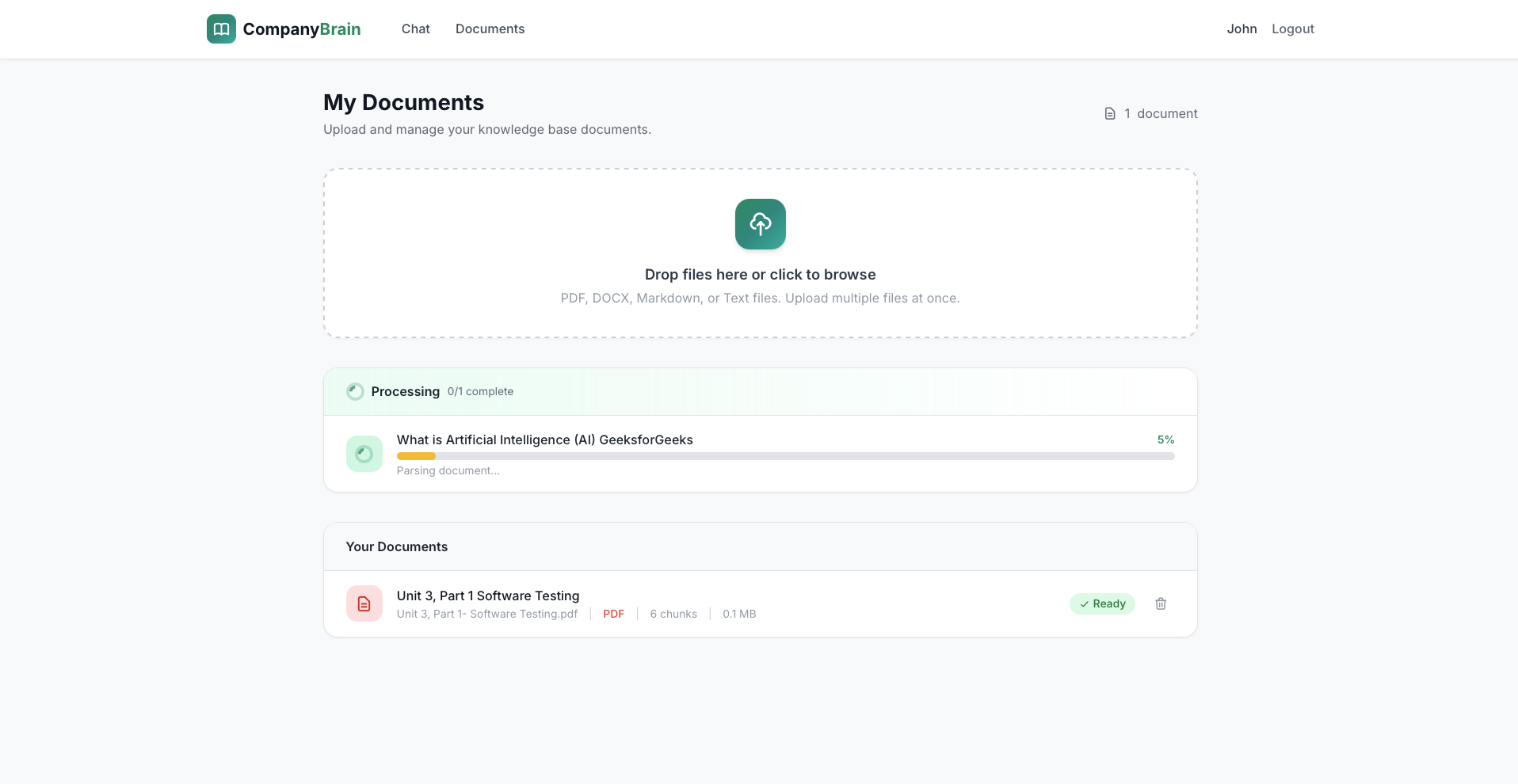

PDF, DOCX, Markdown, or plain text. Async background pipeline with live 0–100% progress.

LlamaParse for structured PDFs, smart 800-char overlapping chunks, OpenAI text-embedding-3-small vectors.

pgvector <=> operator returns top-5 most relevant chunks. User-scoped, status-filtered.

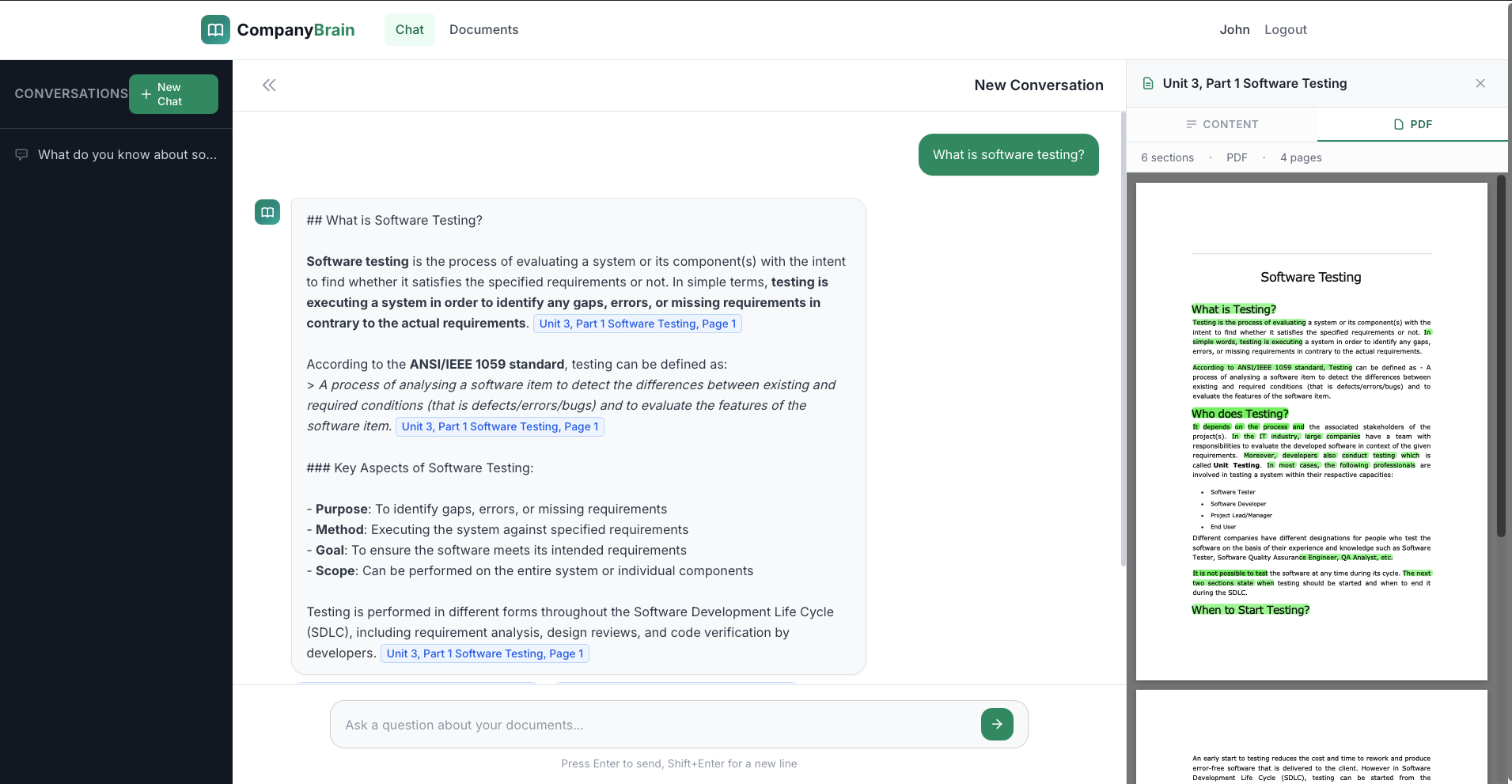

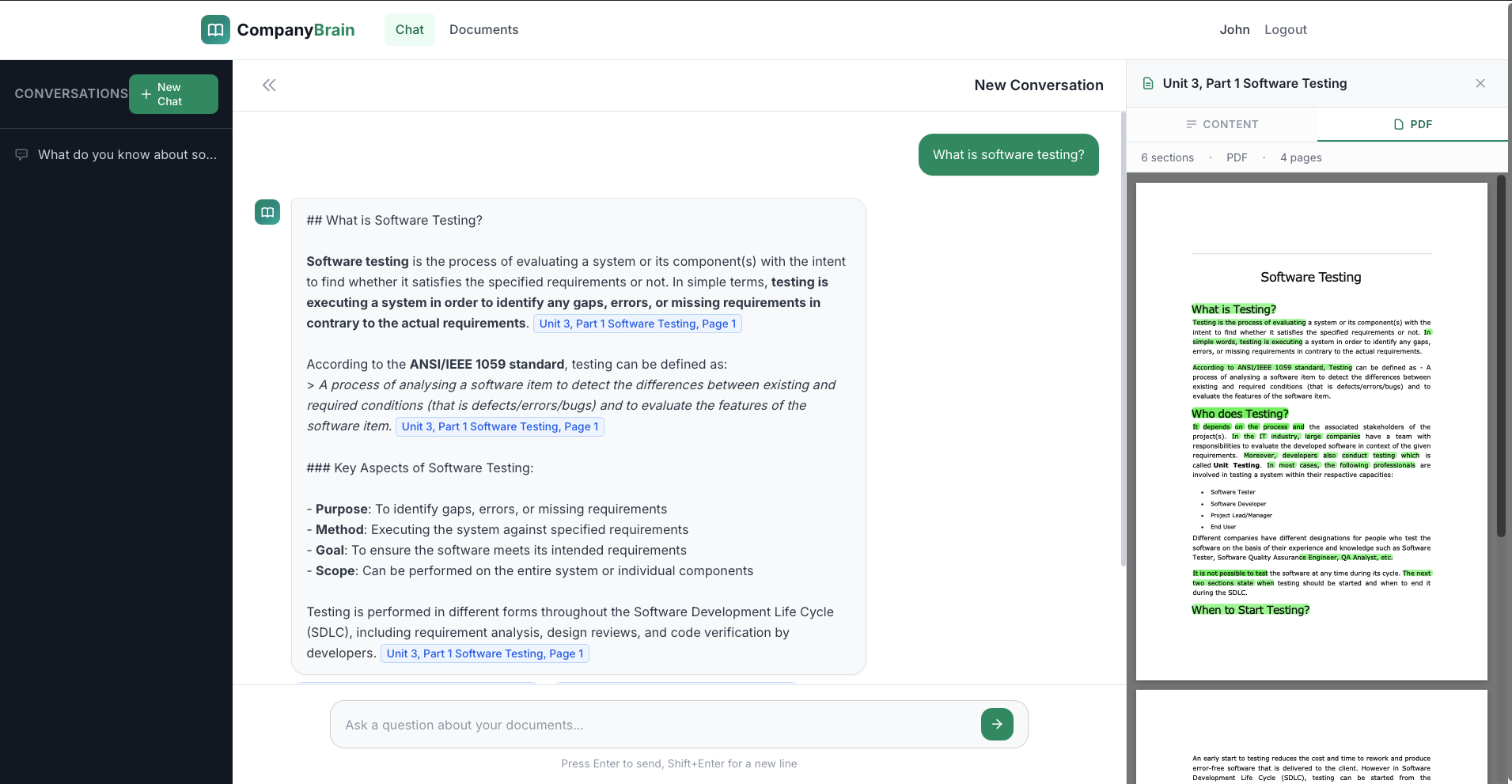

Context-grounded generation: answer + confidence + source references. Streamed token-by-token via SSE.

Last 10 messages in context. "Tell me more" and "How does that compare to…" just work.

```python
# Full RAG loop: embed → retrieve → generate
from uuid import UUID


async def handle_chat_stream(
    query: str,
    conversation_id: UUID,
    user_id: UUID,
):
    # 1. Embed the question
    query_vec = await embedding_service.get_embedding(query)

    # 2. Cosine similarity search (pgvector)
    chunks = await retrieval_service.search_similar_chunks(
        embedding=query_vec,
        user_id=user_id,
        top_k=5,
    )

    # 3. Build context from top-5 chunks
    context = build_source_context(chunks)
    history = await get_last_messages(
        conversation_id, limit=10,
    )

    # 4. Stream answer from Claude, keeping the tokens for persistence
    answer_parts = []
    async for token in ai_service.stream_answer(
        query=query,
        context=context,
        history=history,
    ):
        answer_parts.append(token)
        yield f"data: {token}\n\n"

    # 5. Persist message + sources
    # sources and confidence are taken from the model's structured reply
    # (extraction omitted in this excerpt)
    answer = "".join(answer_parts)
    await store_message(
        conversation_id, answer, sources, confidence,
    )
```

Two pipelines: ingestion writes vectors, query reads them. Both fully async, streamed, and user-scoped.

PDF (LlamaParse structured), DOCX (heading detection), Markdown (header split), TXT. Async background processing with live 0–100% progress bar.

800-char max, 100-char overlap, sentence-boundary breaks. Section title + page number tracked per chunk for precise citations.
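The chunking rules above (800-character cap, 100-character overlap, sentence-boundary breaks, section and page carried on every chunk) could be sketched roughly as follows. The `Chunk` dataclass, the `chunk_text` name, and the regex-based sentence splitter are assumptions for illustration, not the product's actual code.

```python
import re
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    section: str  # section title, kept for citations
    page: int     # page number, kept for citations


def chunk_text(text: str, section: str, page: int,
               max_chars: int = 800, overlap: int = 100) -> list:
    """Split text into chunks of at most max_chars, breaking between sentences."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    chunks = []
    current = ""
    for sentence in sentences:
        # A single sentence longer than the cap is hard-split into pieces.
        pieces = [sentence[i:i + max_chars]
                  for i in range(0, len(sentence), max_chars)]
        for piece in pieces:
            candidate = f"{current} {piece}".strip()
            if len(candidate) <= max_chars:
                current = candidate
                continue
            chunks.append(Chunk(current, section, page))
            # Carry the tail of the finished chunk forward as overlap.
            # The tail is character-based, so it may start mid-word.
            tail = current[-overlap:] if overlap else ""
            current = f"{tail} {piece}".strip()
            if len(current) > max_chars:
                # No room for the overlap next to a near-cap piece.
                current = piece
    if current:
        chunks.append(Chunk(current, section, page))
    return chunks
```

Keeping `section` and `page` on each chunk is what lets a later answer point back to a precise location instead of just a file name.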

Vector storage lives inside PostgreSQL. No Pinecone or Weaviate. The <=> cosine distance operator handles top-k retrieval in a single query.
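A single-query retrieval along these lines might look like the sketch below. In pgvector, `<=>` returns cosine distance, so similarity is `1 - distance`. The table name `document_chunks`, its columns, the `'ready'` status value, and both helper functions are hypothetical; the placeholders follow psycopg's named-parameter style.

```python
from uuid import UUID, uuid4

# Hypothetical schema: document_chunks(id, content, section_title,
# page_number, embedding vector, user_id, status).
SIMILARITY_SQL = """
SELECT id, content, section_title, page_number,
       1 - (embedding <=> %(query_vec)s::vector) AS similarity
FROM document_chunks
WHERE user_id = %(user_id)s
  AND status = 'ready'
ORDER BY embedding <=> %(query_vec)s::vector
LIMIT %(top_k)s
"""


def to_vector_literal(vec: list) -> str:
    """Render a list of floats in pgvector's text format, e.g. [0.1,0.2]."""
    return "[" + ",".join(str(float(x)) for x in vec) + "]"


def build_search_params(query_vec: list, user_id: UUID, top_k: int = 5) -> dict:
    """Bundle the named parameters that SIMILARITY_SQL expects."""
    if top_k < 1:
        raise ValueError("top_k must be at least 1")
    return {
        "query_vec": to_vector_literal(query_vec),
        "user_id": str(user_id),
        "top_k": top_k,
    }


if __name__ == "__main__":
    params = build_search_params([0.1, 0.2, 0.3], uuid4())
    print(SIMILARITY_SQL, params)
```

Ordering by the raw distance expression, rather than by the computed `similarity` alias, keeps the query in the shape pgvector can serve from a vector index if one exists.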

Token-by-token via SSE with cursor animation. Grounded to context only: "Answer ONLY from provided sources." The reply is JSON that includes a confidence score.
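For the streaming side, a small helper that frames each token as a Server-Sent Event could look like this. In the SSE wire format every payload line needs its own `data:` prefix and an event ends with a blank line, so a token containing a newline has to be split. The `sse_event` helper and the `done` event name are assumptions for illustration.

```python
import json
from typing import Optional


def sse_event(data: str, event: Optional[str] = None) -> str:
    """Format one Server-Sent Event frame.

    Each line of the payload gets its own "data: " prefix, and the frame
    is terminated by a blank line.
    """
    lines = []
    if event:
        lines.append(f"event: {event}")
    for part in data.split("\n"):
        lines.append(f"data: {part}")
    return "\n".join(lines) + "\n\n"


if __name__ == "__main__":
    # Stream a few tokens, then a final metadata event (values are made up).
    for token in ["PTO ", "accrues ", "monthly.\n"]:
        print(repr(sse_event(token)))
    meta = json.dumps({"confidence": 0.9, "sources": [1, 2]})
    print(repr(sse_event(meta, event="done")))
```

Sending the confidence score and sources as one closing event, rather than inside the token stream, is one possible design; the page does not say how the real service delivers them.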

Every answer cites the doc, page, and section. Click a citation → annotated PDF with green-highlighted source text via PyMuPDF.
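Citations like these depend on each retrieved chunk reaching the model with its location attached. A minimal sketch of that context-building step follows. The name `build_source_context` appears in the chat handler, but this body, the `RetrievedChunk` fields, and the `[n]` label format are guesses for illustration only.

```python
from dataclasses import dataclass


@dataclass
class RetrievedChunk:
    doc_name: str
    page: int
    section: str
    text: str
    similarity: float


def build_source_context(chunks: list) -> str:
    """Join chunks into one context string, each under a numbered citation header."""
    blocks = []
    for i, chunk in enumerate(chunks, start=1):
        header = f'[{i}] {chunk.doc_name}, page {chunk.page}, section "{chunk.section}"'
        blocks.append(f"{header}\n{chunk.text}")
    return "\n\n".join(blocks)


if __name__ == "__main__":
    demo = [
        RetrievedChunk("handbook.pdf", 12, "PTO", "Employees accrue PTO monthly.", 0.91),
        RetrievedChunk("handbook.pdf", 13, "Carry-over", "Unused days may roll over.", 0.84),
    ]
    print(build_source_context(demo))
```

With numbered headers in the prompt, the model can refer to a source by its label, and the application can map that label back to the document, page, and section.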

Last 10 messages injected into context. Conversation persistence, list, load, delete. Follow-ups just work.
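The 10-message window can be sketched as a simple slice over the stored conversation. The message shape (`role` / `content` dicts) and the `build_model_messages` name are assumptions. So is the choice to add the new question on top of the 10 history messages rather than count it among them.

```python
def build_model_messages(history: list, query: str, limit: int = 10) -> list:
    """Return the last `limit` stored messages followed by the new user question."""
    window = history[-limit:] if limit > 0 else []
    return [*window, {"role": "user", "content": query}]


if __name__ == "__main__":
    history = [
        {"role": "user", "content": "What's our PTO policy?"},
        {"role": "assistant", "content": "Employees accrue PTO monthly."},
    ]
    for message in build_model_messages(history, "Can I carry over unused days?"):
        print(message["role"], "->", message["content"])
```

Because the earlier question and answer travel with the follow-up, "unused days" resolves to PTO days without the user restating the topic.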

User/doc/chunk/message counts. Top-5 cited documents. Recent queries (trending topics). Document health dashboard.

Every document, chunk, conversation, and query filtered by user_id. OTP passwordless auth. Multi-tenant ready.

Local filesystem for dev, AWS S3 or DigitalOcean Spaces for production. Signed URLs for secure temporary access.

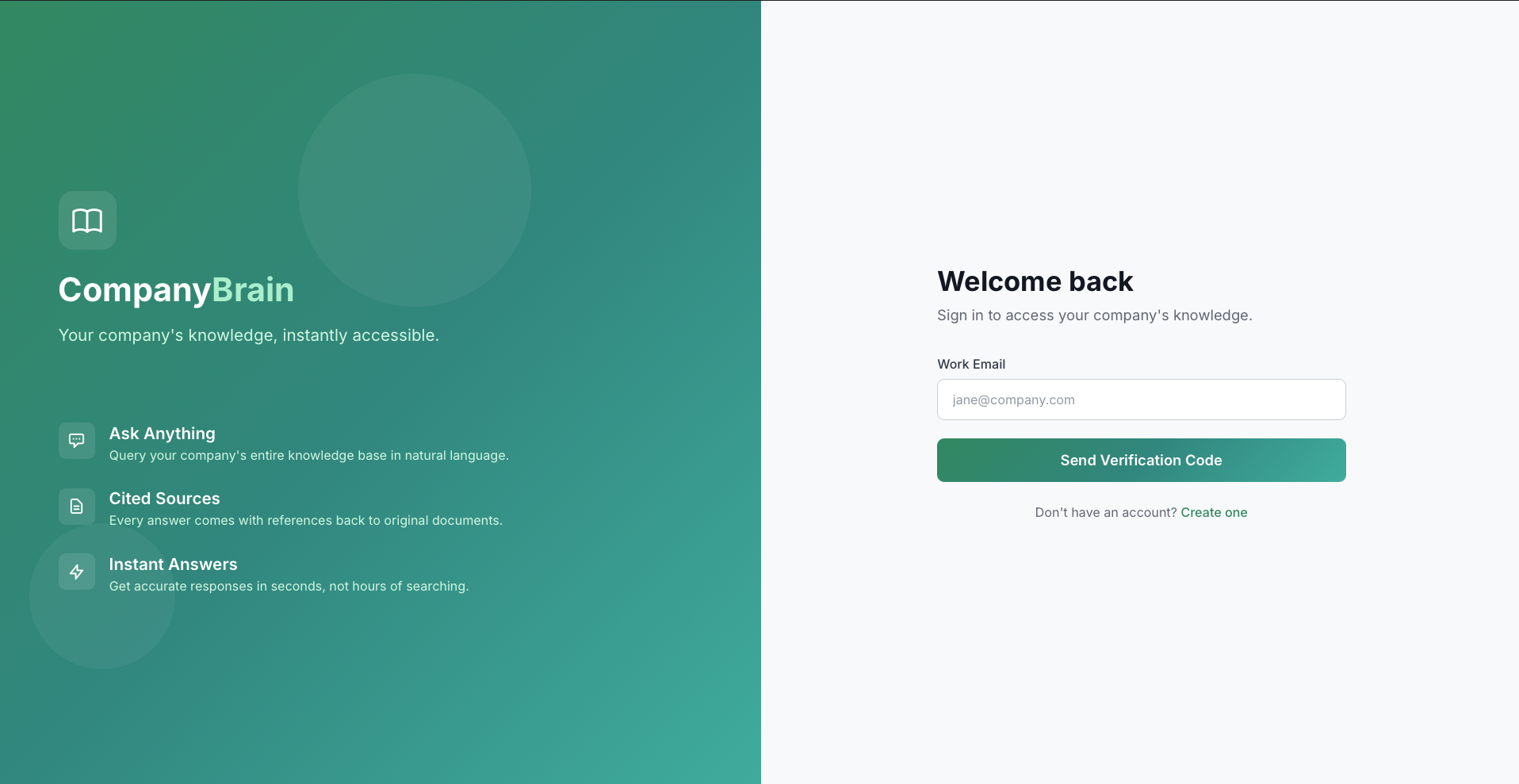

Enter email + name → 6-digit OTP → verified. No passwords, no friction.
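A passwordless flow of this kind can be sketched with the standard library alone. Everything here is an assumption about how such a flow might work: the function names, the idea of storing only a hash of the code, and the expiry timestamp (the page does not state a time limit).

```python
import hashlib
import hmac
import secrets
import time
from typing import Optional


def generate_otp() -> str:
    """Return a cryptographically random 6-digit code, zero-padded."""
    return f"{secrets.randbelow(10**6):06d}"


def hash_otp(code: str, email: str) -> str:
    """Hash the code together with the email so the raw code is never stored."""
    return hashlib.sha256(f"{email}:{code}".encode()).hexdigest()


def verify_otp(submitted: str, stored_hash: str, email: str,
               expires_at: float, now: Optional[float] = None) -> bool:
    """Accept the code only before expiry, using a constant-time comparison."""
    now = time.time() if now is None else now
    if now > expires_at:
        return False
    return hmac.compare_digest(hash_otp(submitted, email), stored_hash)


if __name__ == "__main__":
    email = "new.hire@example.com"
    code = generate_otp()
    stored = hash_otp(code, email)
    expires_at = time.time() + 300  # hypothetical 5-minute validity
    print(verify_otp(code, stored, email, expires_at))
```

`secrets` is used instead of `random` because login codes need an unpredictable source, and `hmac.compare_digest` avoids leaking information through comparison timing.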

Drag in the employee handbook, SOPs, runbooks. Live progress bar: parse → chunk → embed → ready.

"What's our PTO policy?" — embedded, searched across all chunks, top-5 retrieved by cosine similarity.
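To make "top-5 by cosine similarity" concrete, here is the same ranking done in plain Python on toy vectors. In the product this work happens inside PostgreSQL; the function names and the tiny 2-dimensional vectors below are purely illustrative.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as matching nothing
    return dot / (norm_a * norm_b)


def top_k(query: list, chunks: dict, k: int = 5) -> list:
    """Rank chunk ids by similarity to the query and keep the best k."""
    scored = [(chunk_id, cosine_similarity(query, vec))
              for chunk_id, vec in chunks.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


if __name__ == "__main__":
    chunks = {"pto": [1.0, 0.0], "expenses": [0.0, 1.0], "leave": [1.0, 1.0]}
    for chunk_id, score in top_k([1.0, 0.0], chunks, k=2):
        print(chunk_id, round(score, 3))
```

Cosine similarity compares direction rather than length, so a short question and a long passage can still score as close matches when they are about the same thing.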

Claude generates the answer token by token. Sources show doc name, page, section, and similarity score.

Open annotated PDF — the exact text that Claude used is green-highlighted via PyMuPDF annotations.

"Can I carry over unused days?" — conversation memory keeps context. 10-message sliding window.

Every policy, every SOP, every runbook — indexed, searchable, citable. New hires self-serve from day one. Managers stop answering the same question twice.